How to Automate Supply Chain Risk Reports: A Guide for Developers

Do you use Python? If so, this guide will help you automate supply chain risk reports using AI Chat GPT and our News API.

While free speech and censorship on social media have continued to dominate public attention over the past year, the biggest shift has been the sharp rise in organizations’ attention to monitoring extremist and violent activity on unregulated social media platforms, also known as alternative social media.

Before we expand on the latest illicit content trends on alternative social media, it’s important to first define this group of platforms. Alternative social media, also known as “Alt-tech” (alternative technology), are social media platforms that resemble mainstream social media in their structure and the way they’re used. However, unlike mainstream social media, their alternative counterparts impose few or no restrictions on the type of content users can share.

These platforms attracted users who had either been banned from mainstream services themselves or who supported figures, pages, and groups that had been banned. Because of the lack of content restrictions, alternative social media platforms have also attracted criminals and radicals, who use them to share content that provokes and incites violence and other criminal activity. Today, the user base of alternative social media platforms includes many alt-right and far-right members who freely spread radical opinions that can lead to criminal behavior in the real world.

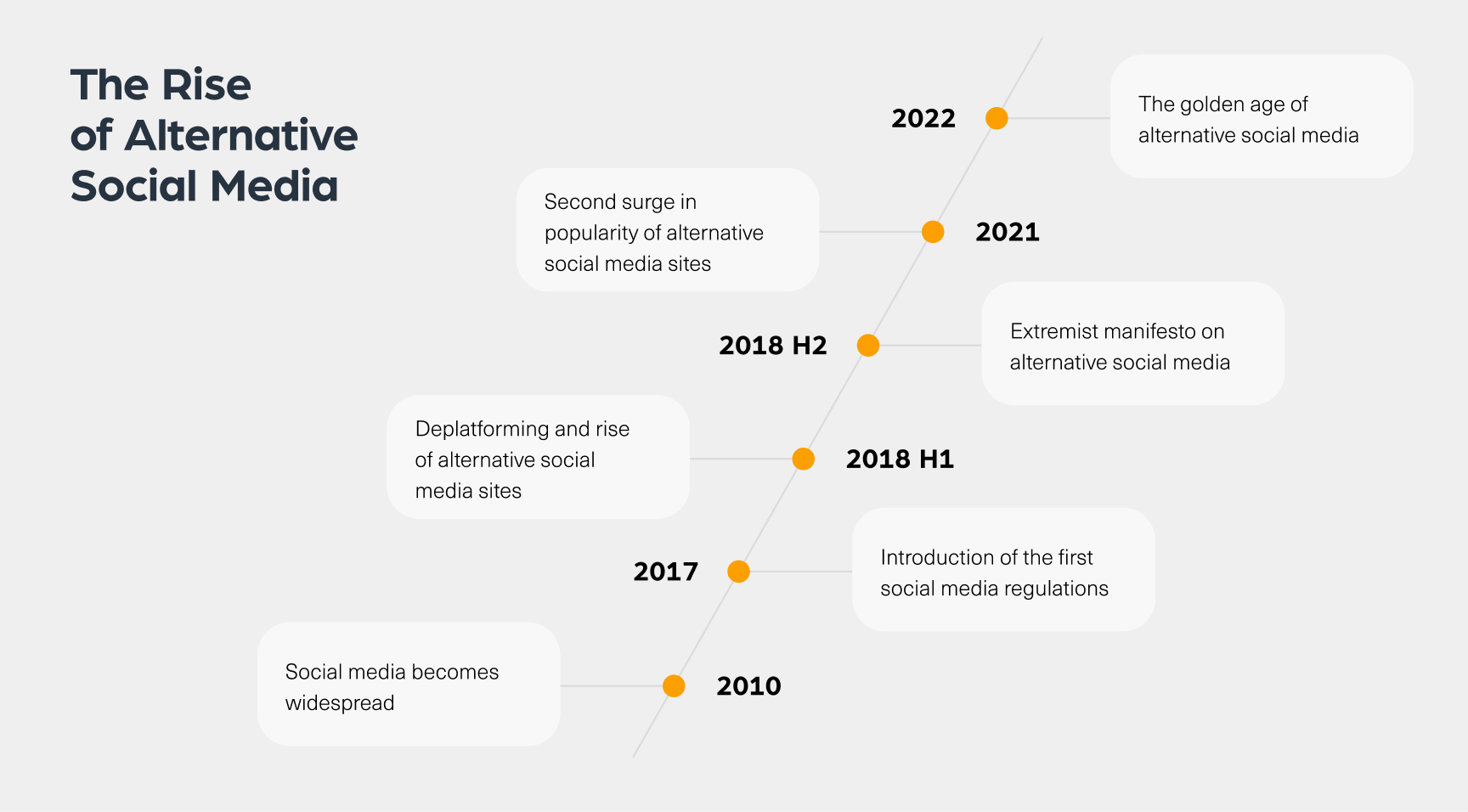

The rise of social media platforms took place around 2010. By 2017, these sites were so widespread that US regulators forced Twitter and Facebook to enforce their own terms of service, which included content restrictions on their users. As a result, some radical organizations and users migrated from mainstream social media platforms to alternative social media sites, where they could freely share any type of content.

The popularity of these sites saw another major spike in 2018, when a mass shooter named Robert Gregory Bowers killed 11 people in a terrorist attack at the Tree of Life synagogue in Pittsburgh. The attack took place after Bowers posted antisemitic messages on Gab, one of the most popular alternative social media platforms used by the far right. Following this event, more radical organizations turned to alternative social media platforms.

Another huge surge in the popularity of these sites took place in early 2021, after the U.S. election that ended Donald Trump’s presidency. Following the events at the Capitol, Trump and many of his followers were banned from mainstream social media platforms such as Facebook, Twitter, and YouTube, which sent waves of radical users to alternative sites.

Today, we can also see more alternative sites that mirror mainstream social media sites. Take, for example, MeWe, a site that mimics Facebook; BitChute, a video-streaming platform that works similarly to YouTube; and Parler, an alternative to Twitter.

Because very little content is barred, alternative social media have become a critical place to track radical activity online. We often see extremist content shared before related real-life radical and violent events take place, such as the Tree of Life synagogue shooting mentioned previously. This is why monitoring these sites is critical to gathering intelligence ahead of radical incidents.

Using Webz.io’s Cyber API, we were able to map several types of illicit content on alternative social media, so let’s dive in.

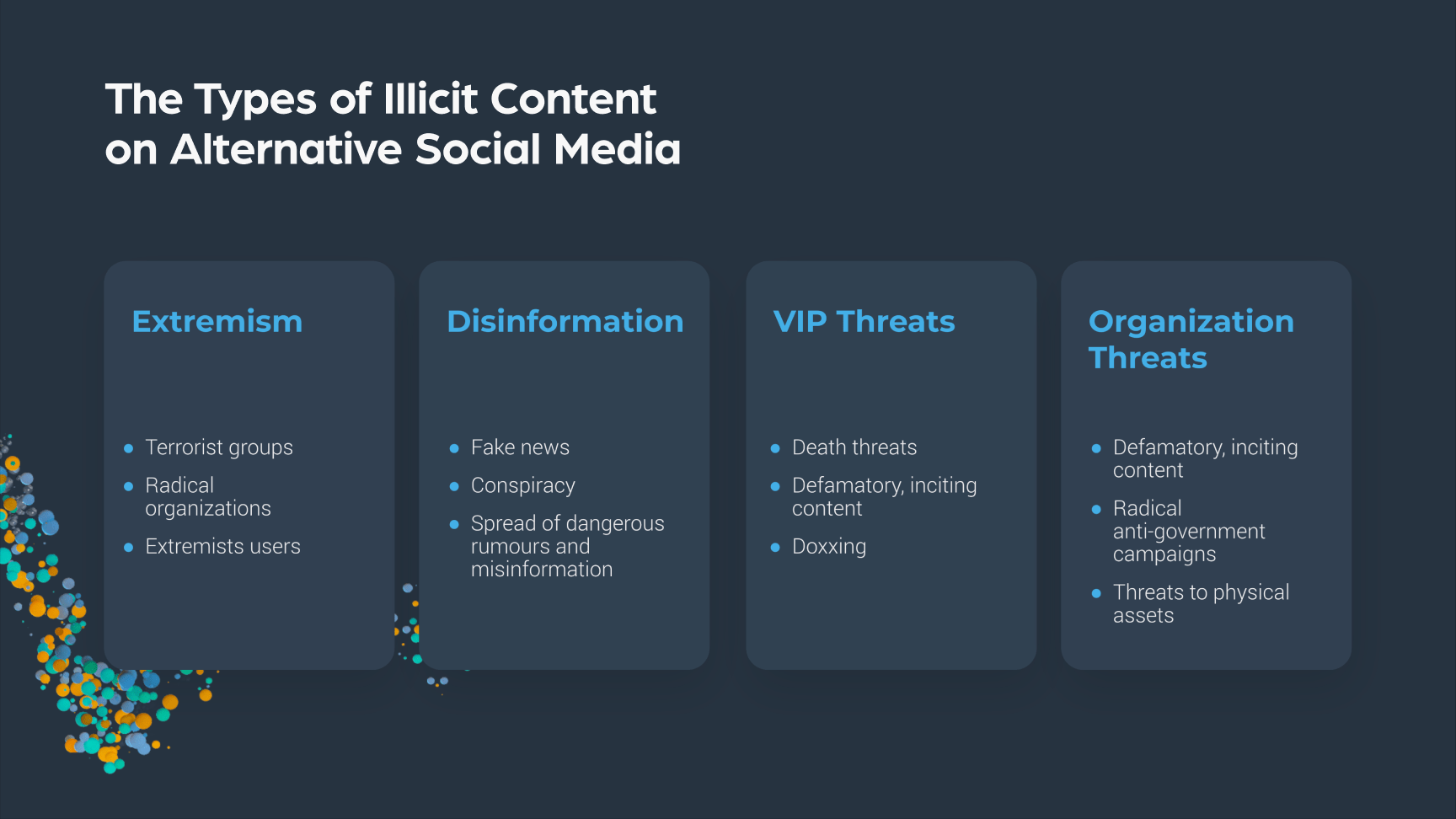

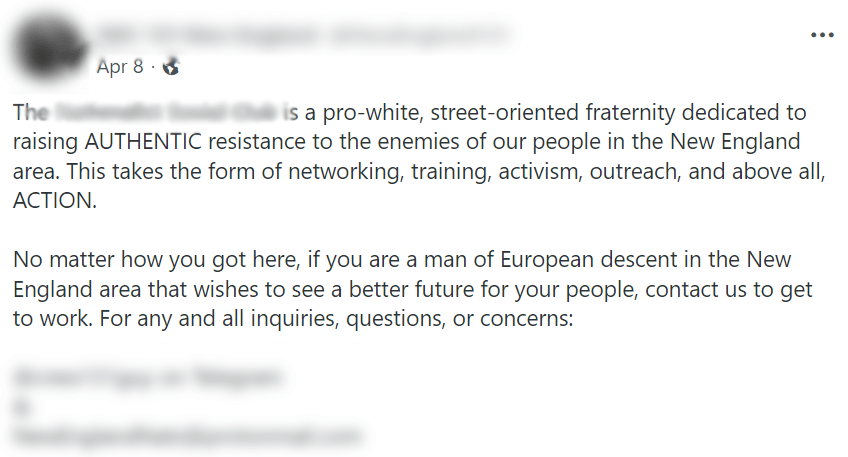

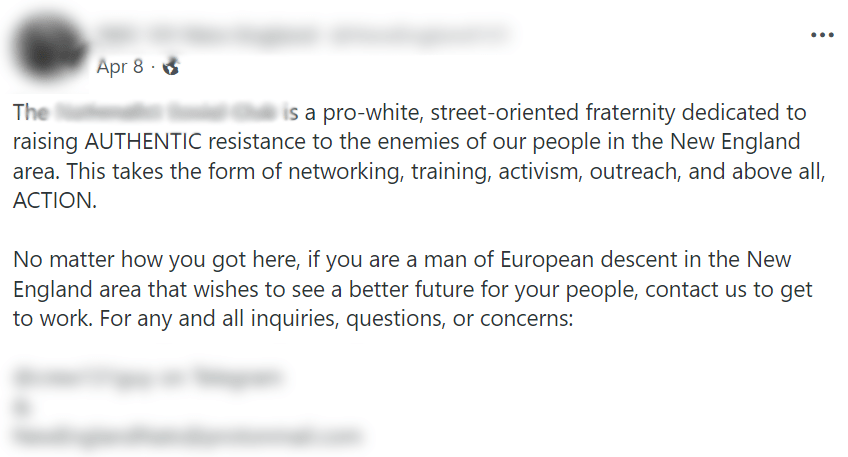

Alternative social media are frequented by many radical organizations and communities, such as white supremacists and neo-Nazis. These can be radical individuals or organizations who maintain active groups, pages, and discussions to spread their agenda and promote their activities. They often inflame and provoke violence that sometimes crosses over into the physical world in the form of hate crimes.

Because alternative social media impose few content restrictions, fake news, conspiracy theories, and rumors spread very quickly on these platforms. This type of disinformation is shared on a daily basis, and in extreme cases it can result in physical, violent action.

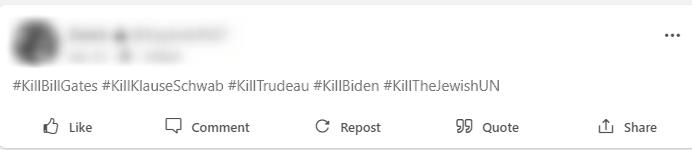

Defamatory and inciting content, as well as death threats against executives and VIPs, are very common on alternative social media sites. They can relate to news trends, dedicated hate groups, or single influencers, or appear as part of an inflammatory discussion. Another widespread form of threat to executives is doxxing, a form of cyberbullying aimed at exposing someone’s private information online. This information might include home addresses or leaked PII.

Alternative social media platforms also host a variety of threats against organizations and brands.

These threats usually come in the form of a radical campaign or social trend against a company. In the best-case scenario, the organization suffers PR damage or limited financial losses. In the worst-case scenario, the campaign can result in violent attacks against a company’s physical assets and employees.

Monitoring illicit alternative social media data is critical to organizations of all industries, sizes, and regions. There are several ways in which data collected from such sites can be turned into intelligence for organizations, including:

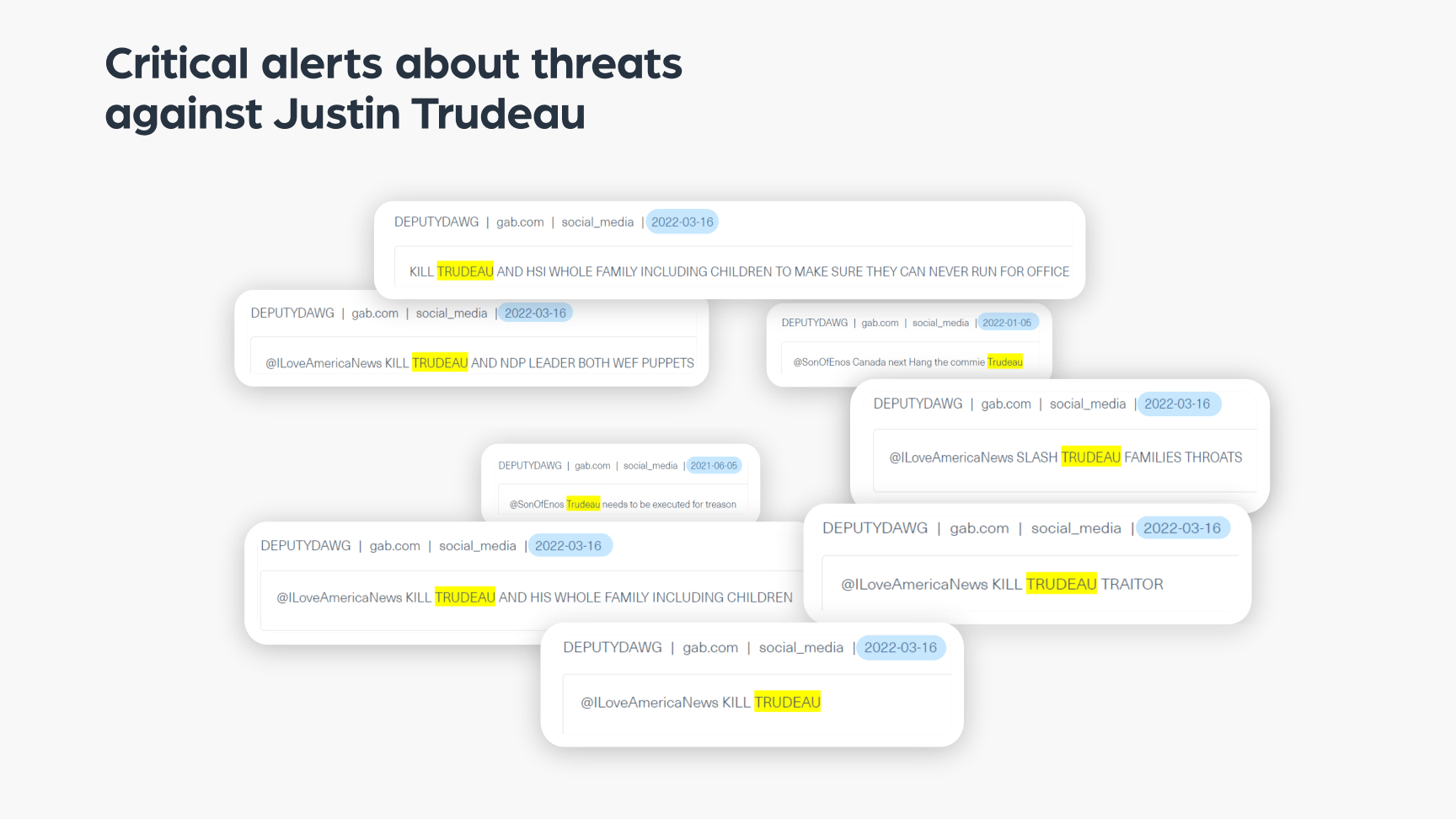

When monitoring data from alternative social media platforms, an organization can find threats, inflammatory statements, and radical manifestos on a daily basis. Although most of the threat actors who publish that type of content are not actually planning to take someone’s life, some are. This is why it’s essential to track the right discussions online and obtain critical alerts that can save lives.

One way to identify a critical alert is to track threats over time. If a threat actor keeps repeating a threat, it can indicate a high intent to carry out violent actions.

In the example below, you can see a few screenshots of posts written by the same threat actor over time. All of them contain a direct death threat against Justin Trudeau, the Prime Minister of Canada. Because the threats persist over a span of several months, a deeper investigation is required to determine whether this threat actor poses a real risk.

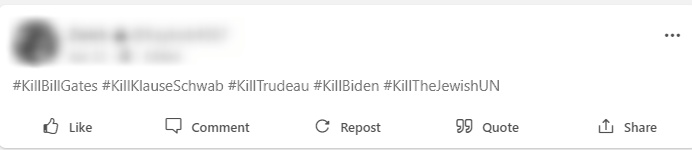
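The persistence check described above can be sketched in a few lines of Python. This is a minimal illustration and not Webz.io’s method: the post records, their field names (`author`, `date`, `is_threat`), and the thresholds are all assumptions made for the example, and in practice the `is_threat` flag would come from an analyst or a classifier.

```python
from collections import defaultdict
from datetime import date

# Hypothetical post records; field names are assumptions for illustration.
posts = [
    {"author": "user_a", "date": date(2022, 1, 14), "is_threat": True},
    {"author": "user_a", "date": date(2022, 3, 2), "is_threat": True},
    {"author": "user_a", "date": date(2022, 5, 20), "is_threat": True},
    {"author": "user_b", "date": date(2022, 2, 1), "is_threat": True},
    {"author": "user_c", "date": date(2022, 4, 1), "is_threat": True},
    {"author": "user_c", "date": date(2022, 4, 3), "is_threat": True},
    {"author": "user_c", "date": date(2022, 4, 5), "is_threat": True},
]


def persistent_authors(posts, min_span_days=60, min_posts=3):
    """Map each persistent author to the span (in days) of their threats.

    An author counts as persistent when they posted at least `min_posts`
    threatening posts spread over at least `min_span_days` days.
    Both thresholds are arbitrary assumptions.
    """
    threat_dates = defaultdict(list)
    for post in posts:
        if post["is_threat"]:
            threat_dates[post["author"]].append(post["date"])

    flagged = {}
    for author, dates in threat_dates.items():
        span = (max(dates) - min(dates)).days
        if len(dates) >= min_posts and span >= min_span_days:
            flagged[author] = span
    return flagged


flagged = persistent_authors(posts)
print(flagged)
```

In this sample data only `user_a` is flagged: `user_b` posted a single threat, and `user_c` posted three threats within a few days, which is a burst rather than a sustained pattern.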

Protecting a brand or an executive means an organization needs eyes everywhere, including on alternative social media. High-level, professional monitoring includes locating the pages, hashtags, and profiles on these platforms that are dedicated to sharing threats against a certain entity.

To do that, organizations need to track the popular hashtags used in extremist discussions.

Take, for example, the post below, which was taken from an alternative social media site. You can see it includes several radical hashtags against different figures, groups, and institutions, such as Bill Gates, Canadian Prime Minister Justin Trudeau, U.S. President Joe Biden, Jewish people, and the United Nations.

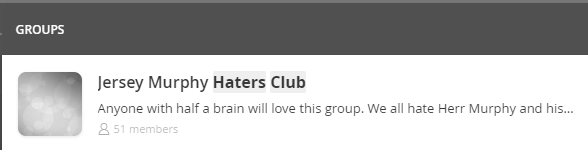

The next image shows another example of a space that is key to monitoring hate groups. It was taken from a different alternative social media site and shows a group dedicated to airing hate speech against Phil Murphy, the Governor of New Jersey.

These two examples do not show a direct critical threat to the lives of executives or VIPs, but they illustrate how monitoring these platforms can provide early, critical alerts about threats that can later escalate in the real world.
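Hashtag tracking of this kind can be sketched as a simple watchlist match. The snippet below is an illustration under stated assumptions: the post texts and watchlist entries are placeholders invented for the example, and the hashtag pattern is a deliberately simple one that only captures word characters after `#`.

```python
import re
from collections import Counter

# Simple hashtag pattern: "#" followed by letters, digits, or underscores.
HASHTAG_RE = re.compile(r"#(\w+)")


def extract_hashtags(text):
    """Return the hashtags in a post, lowercased and without the '#'."""
    return [tag.lower() for tag in HASHTAG_RE.findall(text)]


def match_watchlist(post_texts, watchlist):
    """Count how many posts mention each watchlisted hashtag."""
    watch = {w.lower() for w in watchlist}
    hits = Counter()
    for text in post_texts:
        # Use a set so a hashtag repeated within one post counts once.
        for tag in set(extract_hashtags(text)):
            if tag in watch:
                hits[tag] += 1
    return hits


# Placeholder data, invented for this example.
post_texts = [
    "Join us #BoycottExampleCorp #news",
    "#boycottexamplecorp again and again #BoycottExampleCorp",
    "Nothing to see here",
]
watchlist = ["boycottexamplecorp", "examplecorpceo"]

hits = match_watchlist(post_texts, watchlist)
print(hits)
```

Counting posts rather than raw occurrences keeps a single repetitive post from dominating the totals. A real watchlist would need regular updates, since the hashtags used in these discussions change quickly.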

Most of the illicit activity on alternative sites and on the dark web is carried out anonymously. By collecting content from different sites, groups, and profiles in real time, an organization can cross-reference information using aggregations and advanced queries. This is how an organization can trace identifiable information that was left online by mistake, even if it was deleted shortly afterward.

A basic profiling process starts by identifying the organization or threat actor, including any nicknames or code names. The next step is to find additional identifiers such as contact details, as you can see in the example below:

Then it’s important to gather any identifiable information: emails, account names, crypto addresses, and more. The information collected is then used to expand the search.

For example, by searching for the email address mentioned in the previous post with Webz.io’s Cyber API, we were able to find hundreds of posts and threat actors linked to that email address. In the example below we can see one of them, which includes even more identifiers linked to the organization.

This is only the start of the cross-checks that can be done with enough data. This information can help connect different posts and relevant users, and deanonymize the people behind them.
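The cross-referencing step can be illustrated with a small sketch that pulls identifiers out of post text and groups authors who share one. It does not call Webz.io’s Cyber API; the post records and field names are hypothetical, and both regular expressions are simplified assumptions (the Bitcoin pattern only covers legacy addresses that start with 1 or 3).

```python
import re
from collections import defaultdict

# Simplified patterns, for illustration only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
BTC_RE = re.compile(r"\b[13][a-km-zA-HJ-NP-Z1-9]{25,34}\b")


def extract_identifiers(text):
    """Return the set of email and legacy Bitcoin addresses found in text."""
    emails = {match.lower() for match in EMAIL_RE.findall(text)}
    wallets = set(BTC_RE.findall(text))
    return emails | wallets


def link_by_identifier(posts):
    """Map each identifier to its authors, keeping only shared identifiers."""
    index = defaultdict(set)
    for post in posts:
        for ident in extract_identifiers(post["text"]):
            index[ident].add(post["author"])
    return {ident: authors for ident, authors in index.items() if len(authors) > 1}


# Hypothetical posts; authors and addresses are placeholders.
posts = [
    {"author": "group_page", "text": "Contact us at Contact@example.org to join"},
    {"author": "nickname_17", "text": "send details to contact@example.org today"},
    {"author": "unrelated", "text": "reach me at someone@example.com"},
]

links = link_by_identifier(posts)
print(links)
```

Each shared identifier becomes a pivot: the accounts it connects can be searched again for further identifiers, which is how a single email address can expand into a network of related posts and profiles.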

Alternative social media platforms provide an overview of different trends triggered by political developments, emerging violent protests, and other controversial public events.

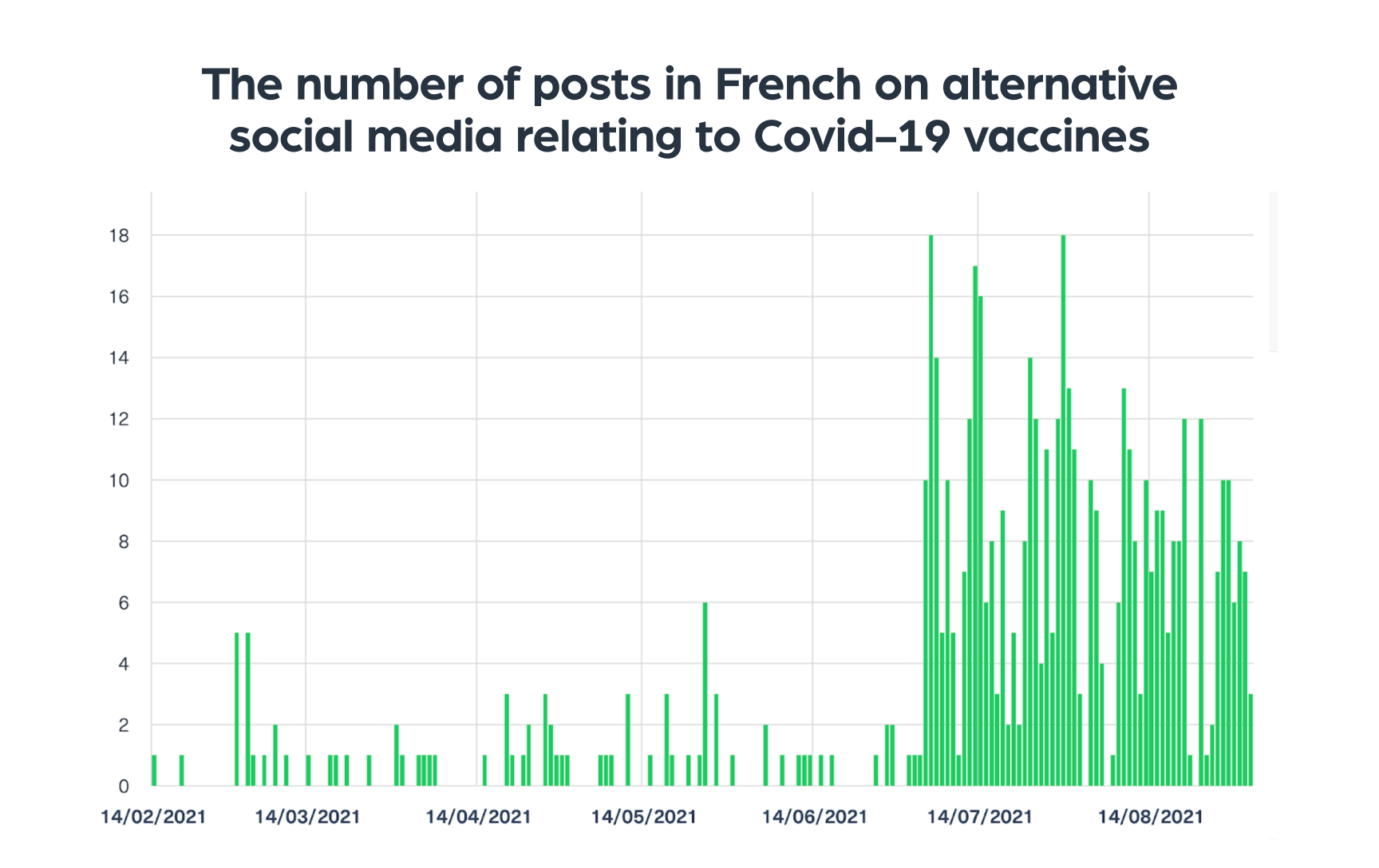

It is important to monitor radical trends, as they can easily transform into physical actions in the real world. Because Webz.io’s database contains information from a range of sources, organizations can gain early insights simply by identifying a spike in the volume of content around a topic, which is often a strong indication that further analysis is needed.

How can it be done? The next chart is a good example of how we tracked discussions relating to COVID-19 vaccination in France in 2021. In July 2021, shortly after France announced mandatory vaccinations, we identified a big increase in the number of daily posts discussing COVID-19 and vaccination.

Many posts were inciting and extreme in nature, advocating radical anti-vaccination stances. Only a couple of weeks after the spike, two violent vandalism attacks took place against vaccine centers in France.
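One simple way to flag this kind of spike is to compare each day’s post count with a baseline built from the preceding days. The sketch below is an assumption about how such a check could work, not a description of how the chart above was produced; the daily counts, window size, and threshold are invented for the example.

```python
from statistics import mean, stdev


def find_spikes(daily_counts, window=7, threshold=3.0):
    """Return indexes of days whose count is far above the recent baseline.

    A day is a spike when its count exceeds the mean of the previous
    `window` days by at least `threshold` standard deviations.
    """
    spikes = []
    for i in range(window, len(daily_counts)):
        baseline = daily_counts[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        # Guard against a flat baseline, where the deviation is zero.
        if sigma == 0:
            sigma = 1.0
        if (daily_counts[i] - mu) / sigma >= threshold:
            spikes.append(i)
    return spikes


# Invented daily post counts for one topic; day 8 shows a sudden jump.
daily_counts = [20, 22, 19, 21, 20, 23, 21, 22, 95, 24]

spikes = find_spikes(daily_counts)
print(spikes)
```

Note that a spike inflates the baseline for the days that follow it, so a sustained surge may only be flagged on its first day. A flagged day is a prompt for an analyst to read the underlying posts, not a conclusion in itself.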

Over the past few years, new alternative sites emerged regularly, each aiming to replace an existing mainstream site by offering similar types of content. It quickly became clear that some of these platforms had become popular among radical communities, giving them unregulated spaces to communicate and spread their agenda. Alongside these, some alternative platforms have moved toward the mainstream; Mastodon, for example, is growing in popularity and aims to replace Twitter. Over the past months, the pace at which new sites emerge has slowed. The need for “free speech” replacements for social platforms has been fulfilled, at least to some extent.

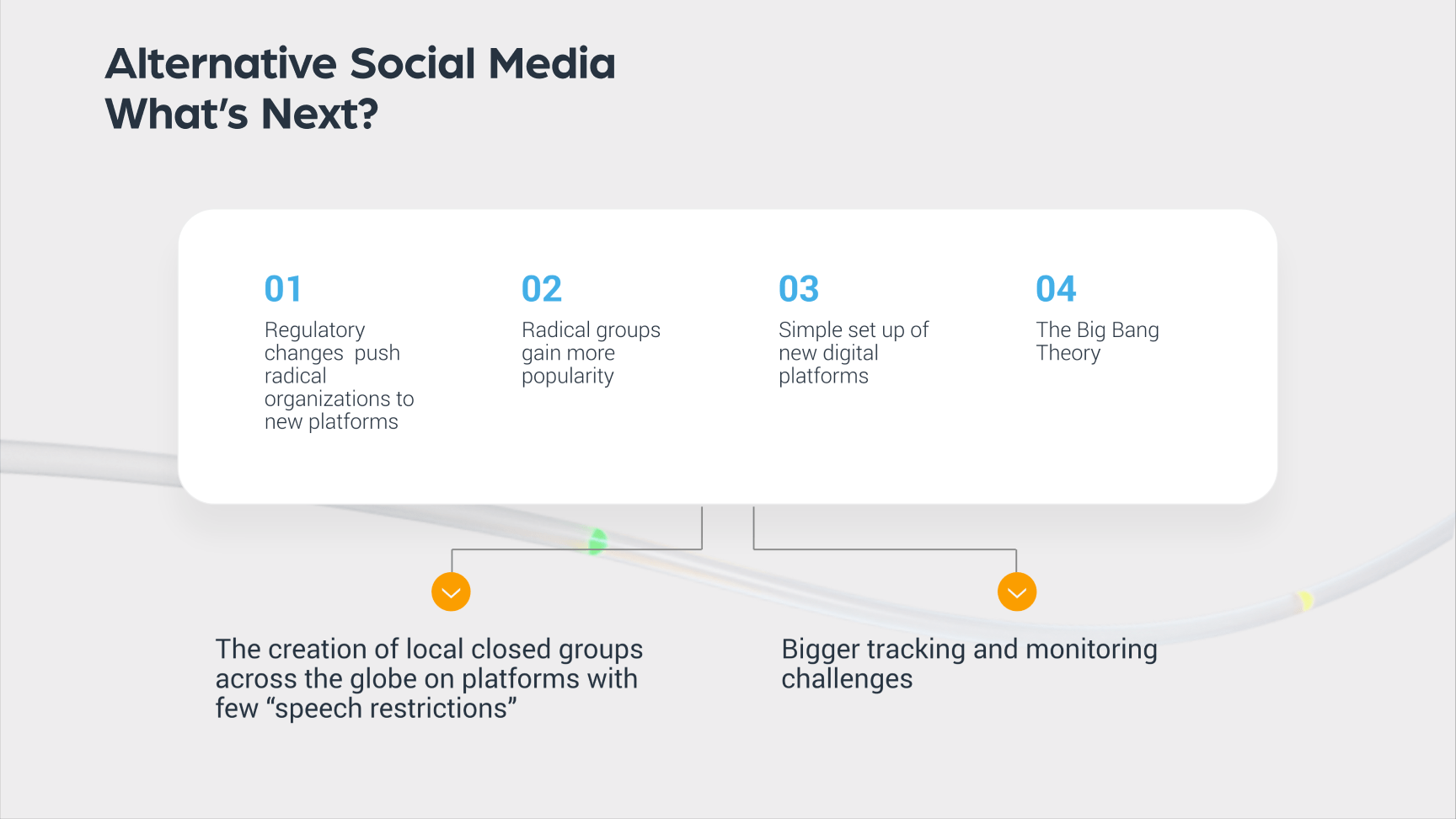

In the distant future, we believe that radical organizations will continue to grow, but they will turn more towards operating in closed groups rather than on public social media platforms. This is mostly because we are likely to see more regulations and legislation limiting such platforms, similar to what mainstream social media sites like Facebook have experienced over the past years.

A precedent for this trend toward closed groups is the move of radical Islamic groups, which first operated on Facebook and Twitter, to encrypted private platforms such as Telegram and Rocket.Chat in response to tighter regulations and restrictions that barred their activities.

As alternative social media sites continue to attract radicals, monitoring them is key to tracking extremist groups and users and to discovering emerging radical trends and activities. This intelligence helps public and private companies, as well as governments, predict and mitigate new threats.
