How to Automate Supply Chain Risk Reports: A Guide for Developers

Do you use Python? If so, this guide will help you automate supply chain risk reports using AI Chat GPT and our News API.

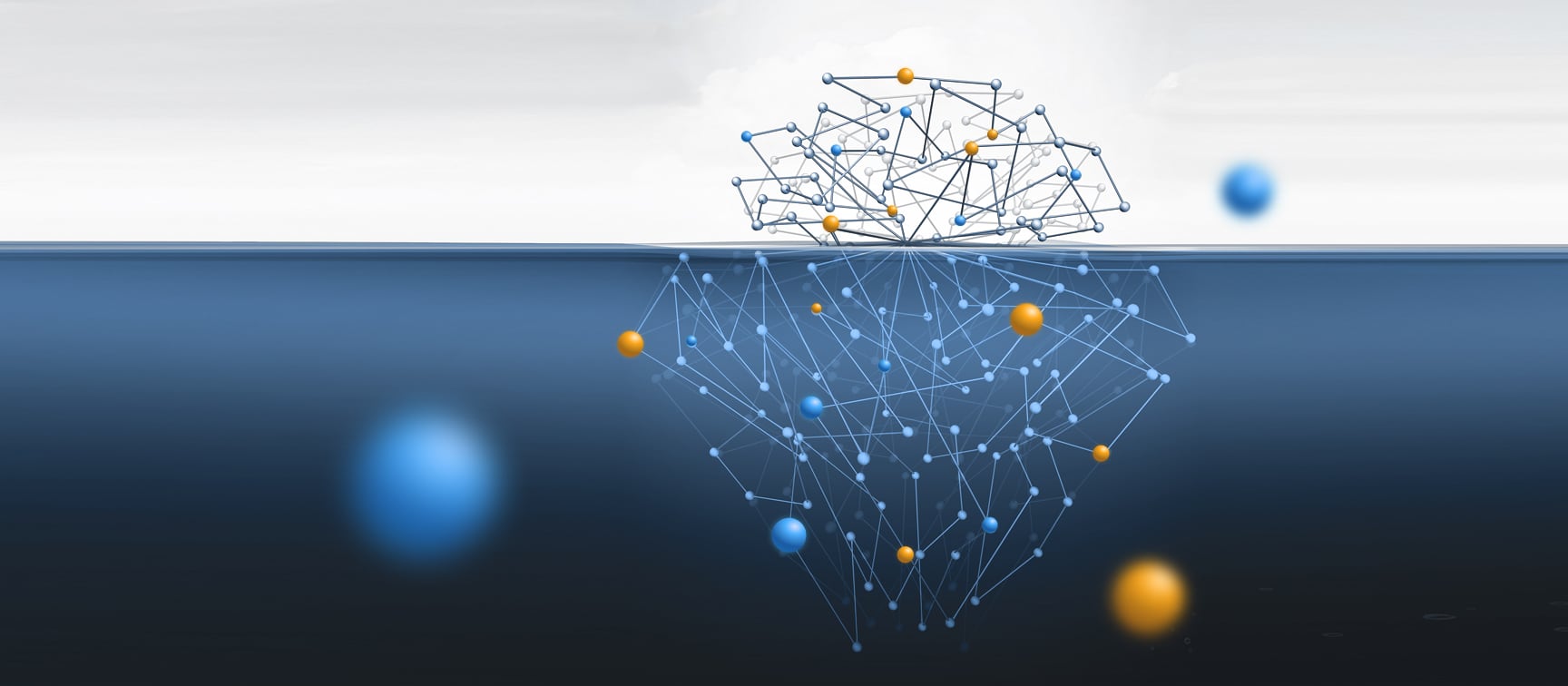

Around 4% of all content online is indexed, or in other words, can be accessed through search engines. The other 96% consists of content that is not indexed, most commonly known as the deep web, which has another, smaller, deeper layer – called the dark web, or the darknet.

But let’s start with the definition of each part of the web before we dive deeper into what really sets them apart.

The open web is also sometimes called the surface web, or the visible web. This part of the web comprises web pages that are indexed by web crawlers, meaning they appear in the search results of mainstream search engines such as Google, Bing, and Yahoo. These pages can be accessed by every internet user without any real restrictions.

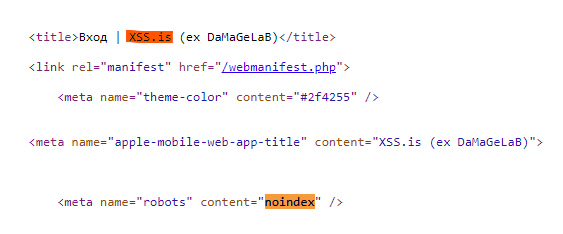

Unlike the open web, the deep web is the part of the web that cannot be reached through search engines. These web pages include a meta tag in their HTML code that tells search engine crawlers not to index them, so they never appear in search results.

You can see an example of such code in the image below, a screenshot of xss.is, a known hacker forum. Its HTML source code includes the noindex meta tag:

So no matter how often we search for xss.is on Google or other search engines, we will only find sites that mention xss.is, not the site itself.
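The check described above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not the method any particular crawler uses; the `RobotsMetaParser` class, the `has_noindex` helper, and the sample HTML are hypothetical names and data introduced for this example.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives found in <meta name="robots" ...> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so default to "".
        attr_map = {name.lower(): (value or "") for name, value in attrs}
        if attr_map.get("name", "").strip().lower() != "robots":
            return
        # The content attribute is a comma-separated list of directives.
        for directive in attr_map.get("content", "").split(","):
            directive = directive.strip().lower()
            if directive:
                self.directives.add(directive)


def has_noindex(html: str) -> bool:
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Hypothetical page source, for illustration only.
SAMPLE_HTML = """<html><head>
<meta name="robots" content="noindex, nofollow">
<title>Example forum</title>
</head><body></body></html>"""

print(has_noindex(SAMPLE_HTML))
```

The sketch only inspects the HTML itself. A site can also request the same behaviour through an HTTP response header, which this example does not check.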

The deep web includes a variety of sites. Some of them host illicit activities, which we will come back to later. Alongside them, there are many legitimate pages that choose not to be indexed for privacy reasons, such as closed groups on Facebook. Another reason to keep a page unindexed is monetary: some sites have a paywall and require a paid subscription or registration to access their content.

But not all deep web sites are the same. Sites like xss.is are completely unindexed and are therefore considered part of the deep web. Other sites and platforms have some pages that are indexed and others that are not; these are defined as partly deep web. Take Facebook, mentioned above: some of its pages are set as private and others as public. In most cases, users decide which settings to apply and who can view or access their page.

The dark web, or darknet, is the deeper layer of the deep web. It refers to pages that are not indexed, which means they don’t appear in search engine results at all. To access the dark web, users need special software, configurations, or authorization, such as Tor, I2P, or ZeroNet. This helps users stay anonymous, as their IP addresses are hidden. The anonymous nature of the dark web has attracted cybercriminals over the years, and it hosts a vast amount of illicit content in spaces such as marketplaces and hacking forums.

After discussing each layer of the web, it is clear that the main difference between the deep web and the dark web is the level of accessibility users have to their content. Deep websites can be accessed by web browsers and are only partially accessible through mainstream search engines, whereas dark websites are never indexed and require special software and tools.
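As a rough illustration of the "special software" distinction, the sketch below flags addresses that belong to the Tor or I2P networks based on their hostname suffix (`.onion` and `.i2p`). The `darknet_network` helper and the example addresses are hypothetical. The check says nothing about whether a regular site is open or deep web, since that depends on indexing rather than on the address, and it does not cover ZeroNet.

```python
from urllib.parse import urlsplit

# Hostname suffixes used by the two networks handled in this sketch.
DARKNET_SUFFIXES = {".onion": "Tor", ".i2p": "I2P"}


def darknet_network(url: str):
    """Return the darknet a URL belongs to, or None for a regular address."""
    # urlsplit only fills in the hostname when a scheme or "//" is present.
    if "://" not in url:
        url = "//" + url
    host = (urlsplit(url).hostname or "").lower()
    for suffix, network in DARKNET_SUFFIXES.items():
        if host.endswith(suffix):
            return network
    return None


# Hypothetical addresses, for illustration only.
for address in ("http://exampleaddress.onion/market",
                "example.i2p",
                "https://www.example.com"):
    print(address, "->", darknet_network(address))
```

A hostname check like this is cheap enough to run before fetching anything, which helps decide whether a regular HTTP client is sufficient or whether the request has to be routed through the corresponding network.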

While these definitions seem very straightforward, they are not necessarily that clear-cut because the web is never that organized and well-defined. So let’s review some exceptions to the general rule by using examples of illicit content we found:

Generally, with some exceptions, we can classify types of illicit content according to where it can be found on the web.

There is a sort of consensus among threat actors on which part of the web they use for different content or activities. For example, drug trafficking usually takes place on dark web marketplaces on the Tor network and in Telegram groups. It is unlikely to be found on the open web, which does not provide sufficient anonymity for illegal activities. In a recent post, we touched on this topic and discussed why threat actors have been migrating from the dark web to instant messaging apps, partly because these platforms enable anonymity.

On the dark and deep web, you can see separate forums, marketplaces, and instant messaging groups created for different purposes. For example, if we examine content relating to financial fraud, forums that host leaked credit information usually don’t carry large databases of PII; those appear on dedicated forums for leaked databases of user credentials. Users therefore have a selection of forums to choose from for every need, from a carding forum to a hacking forum.

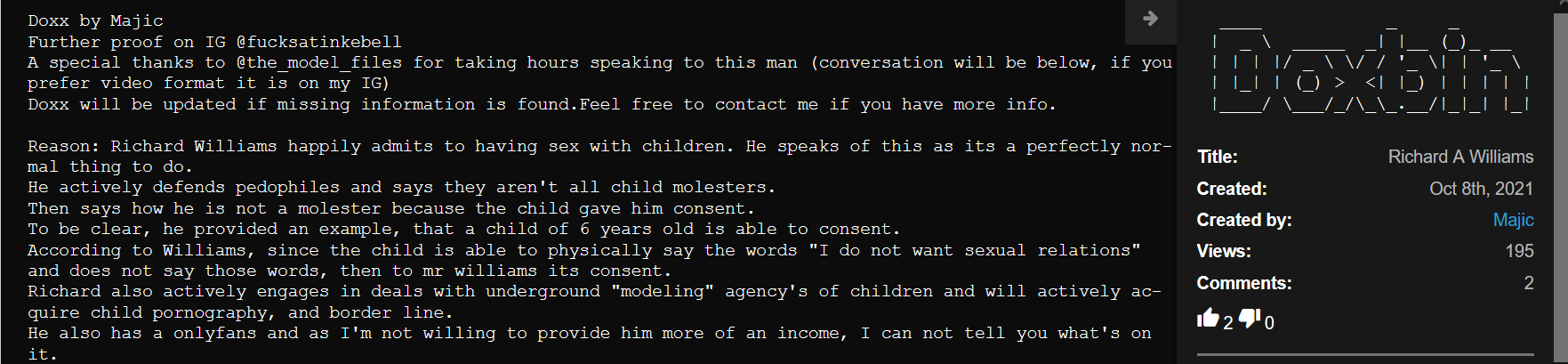

Here is another type of forum, dedicated to a different type of leaked PII: doxxing. Doxxing is a form of cyberbullying that aims to expose someone’s personal information online. This type of leaked PII will not be found on hacking or leak forums. Surprisingly to some, this information usually appears on the open web, in paste sites and forums, and on the deep web, on social media.

As we discussed earlier, illicit content can be found on all layers of the web. The following table lists common types of illicit content alongside the layers of the web and the site types where we usually find them:

| Type of Illicit Content | Layer of the Web |
| --- | --- |
| Drug trafficking | Deep web (instant messaging); Dark web (marketplaces) |
| Firearms trafficking | Dark web (marketplaces) |
| Child sexual abuse | Dark web (forums) |
| Terrorist activities | Deep web (social media, instant messaging); Open web (open forums, image boards) |
| Financial fraud | Open web (pastes); Deep web (forums, instant messaging, marketplaces); Dark web (marketplaces, forums) |
| Data leak | Open web (forums, pastes); Deep web (forums, instant messaging); Dark web (forums) |
| Cyber threats (such as hacking, ransomware, vulnerabilities, etc.) | Open web (forums); Deep web (forums, instant messaging); Dark web (forums, blogs) |
| Counterfeit | Open web (marketplaces); Deep web (instant messaging); Dark web (marketplaces) |
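For readers who want to use the table programmatically, for example to decide which layers to monitor for a given topic, it can be encoded as a plain dictionary. This is only one possible sketch: the `ILLICIT_CONTENT_LAYERS` name and the two helper functions are hypothetical, and the data is copied from the table above without additions.

```python
# Content type -> layer of the web -> site types, as listed in the table.
ILLICIT_CONTENT_LAYERS = {
    "Drug trafficking": {
        "deep web": ["instant messaging"],
        "dark web": ["marketplaces"],
    },
    "Firearms trafficking": {"dark web": ["marketplaces"]},
    "Child sexual abuse": {"dark web": ["forums"]},
    "Terrorist activities": {
        "deep web": ["social media", "instant messaging"],
        "open web": ["open forums", "image boards"],
    },
    "Financial fraud": {
        "open web": ["pastes"],
        "deep web": ["forums", "instant messaging", "marketplaces"],
        "dark web": ["marketplaces", "forums"],
    },
    "Data leak": {
        "open web": ["forums", "pastes"],
        "deep web": ["forums", "instant messaging"],
        "dark web": ["forums"],
    },
    "Cyber threats": {
        "open web": ["forums"],
        "deep web": ["forums", "instant messaging"],
        "dark web": ["forums", "blogs"],
    },
    "Counterfeit": {
        "open web": ["marketplaces"],
        "deep web": ["instant messaging"],
        "dark web": ["marketplaces"],
    },
}


def content_types_on(layer: str) -> list:
    """List the content types the table places on the given layer."""
    return [name for name, layers in ILLICIT_CONTENT_LAYERS.items()
            if layer in layers]


def single_layer_types(layer: str) -> list:
    """List the content types the table places on this layer and no other."""
    return [name for name, layers in ILLICIT_CONTENT_LAYERS.items()
            if set(layers) == {layer}]


print(content_types_on("open web"))
print(single_layer_types("dark web"))
```

Keeping the site types as lists under each layer preserves the table's two levels of detail, so the same structure can answer both "which layers?" and "which kinds of sites?" for a topic.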

After discussing at length the layers of the web and the different types of illicit content they host, let’s take a look at an interesting exception: sites that are considered to be both open web and dark web.

This may sound a little contradictory, but some sites have two addresses, one on the open web and one on the dark web. We often see this with hacking forums, ransomware blogs, and marketplaces that sell credentials (as opposed to marketplaces that sell drugs and weapons, which only appear on the dark web).

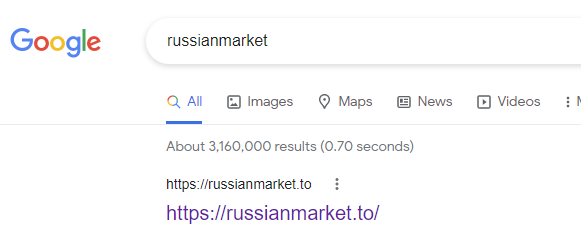

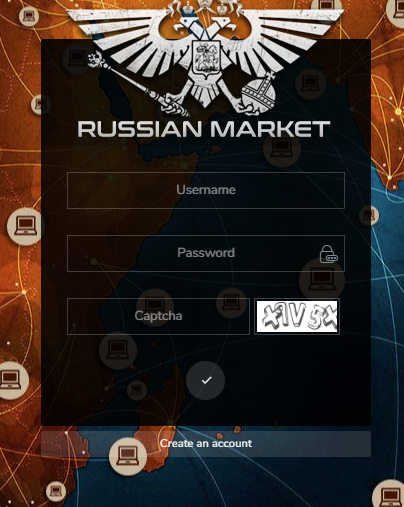

A good example of such a site is Russian Market, which is a marketplace for stolen credentials.

Russian Market can be found on Google, which means that its landing page is indexed and therefore considered to be part of the open web.

But the rest of the site is considered deep web, as it can only be accessed through registration:

Below is a screenshot of the home page of Russian Market, where five different links to the site are listed. Two of them are on the Tor network. Unlike the address indexed in the screenshot above, a Tor address is not indexed by Google and cannot even be opened in a “regular” web browser. This makes Russian Market a dark web site as well.

You may wonder why these sites keep both dark and open web versions. There are several reasons to run both an indexed and an unindexed site:

The main reason to operate an open website is that it is more accessible and stable – which allows admins to gain a wider audience.

The main advantage of running a dark web site is that it is more secure and anonymous: users are safer on the dark web version because their location cannot be identified.

The deep and dark web are popular spaces for the cybercriminal community to engage in various activities. The more illicit activity and content a site contains, the more unstable and restricted it usually is. The deeper the web goes, the more challenging it gets to monitor these types of activities. At Webz.io, we crawl millions of sites across these different layers of the web to help organizations and law enforcement agencies monitor illicit content and cybercrime.
